2023 CITRIS Seed Award

Safety guarantees and robustness quantification of generative AI models

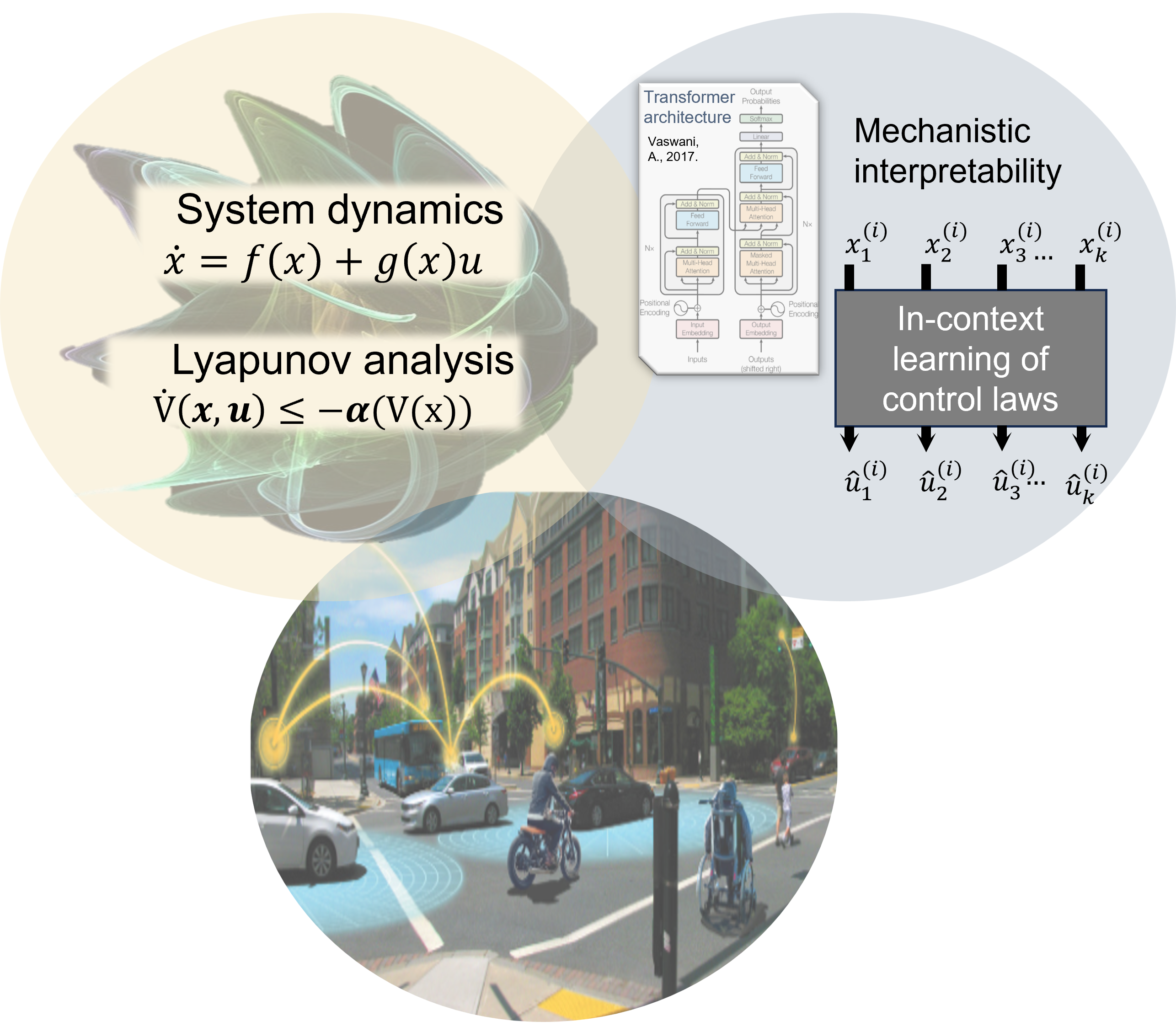

This project studies safety guarantees for transformer-based models in safety-critical applications, with air-traffic control as a motivating domain.

Project overview

Generative AI technologies have led to transformative leaps in the capabilities of AI, but transformer-based models are known to suffer from hallucinations: responses that are incorrect but may sound reasonable. They can also be vulnerable to prompt injection and jailbreaking. Such failures are not acceptable in safety-critical applications such as air-traffic control.

The central question of this project is how developments from dynamical systems, control theory, and formal methods can provide safety and performance guarantees for transformer-based models. The work focuses on metrics for safety and robustness, representability of stability and safety guarantees by transformer architectures, and proof-of-concept testbeds for understanding how transformer models can perform low-level control in safety-critical applications.

Key highlights

Stability guarantees

The project provides methods to train transformer neural networks that achieve stability guarantees similar to those of optimal control methods.

Low-level control

Transformer models were evaluated on low-level control of unstable, nonlinear systems where the context contains only unforced system dynamics and the system parameters are unknown to the transformer.

Robust training for in-context learning

The project shows how transformer-based controllers can learn corrective actions, rather than only clean nominal behavior, when Gaussian noise is injected into the training data.

Failure metrics

The project developed a hallucination-evaluation framework that classifies LLM failures into factual errors, speculative responses, logical fallacies, and improbable scenarios.

Main outcomes

1. In-context learning for low-level control

One of the remarkable abilities of transformer-based neural networks is in-context learning. This motivates the question: can we control a system knowing only that it belongs to a particular family of systems and some data on system evolution, but no specific parameter information?

Our CDC paper (Smith et al. 2026) studies a multi-head, GPT-2 style architecture for in-context control of unstable, nonlinear dynamical systems. The context consists only of the unforced, zero-torque system dynamics. At inference time, the transformer predicts the next state, controller mode, and control action, and the control action is applied to the plant in closed loop (see figure below).
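To make the closed-loop setup concrete, the sketch below shows how a context of unforced (zero-torque) rollouts can be assembled and how a policy's predicted action is applied to the plant at every step. It is a minimal illustration under stated assumptions, not the paper's implementation: the plant is a hypothetical pendulum (not the cartpole or acrobot), `plant_step`, `build_context`, `run_closed_loop`, and `stand_in_policy` are hypothetical names, and the trained transformer is replaced by a fixed PD controller that ignores the context. The sketch also omits the next-state and controller-mode predictions.

```python
import numpy as np

# Hypothetical plant: a torque-driven pendulum with theta = 0 at the unstable upright.
# Its parameters are hidden from the policy; only trajectories enter the context.
DT, G_OVER_L = 0.02, 9.81


def plant_step(x, u):
    """One explicit-Euler step of theta'' = (g / l) * sin(theta) + u."""
    theta, omega = x
    return np.array([theta + DT * omega, omega + DT * (G_OVER_L * np.sin(theta) + u)])


def build_context(initial_states, horizon):
    """Stack zero-torque rollouts into tokens [theta, omega, u] with u = 0."""
    tokens = []
    for x0 in initial_states:
        x = np.asarray(x0, dtype=float)
        for _ in range(horizon):
            tokens.append(np.array([x[0], x[1], 0.0]))
            x = plant_step(x, 0.0)  # unforced dynamics only
    return np.stack(tokens)


def run_closed_loop(policy, context, x0, steps):
    """Query the policy for an action at each step and apply it to the plant."""
    x = np.asarray(x0, dtype=float)
    history = []
    for _ in range(steps):
        u = policy(context, np.array(history), x)
        history.append([x[0], x[1], u])
        x = plant_step(x, u)
    return x, np.array(history)


def stand_in_policy(context, history, x):
    """Placeholder for the trained transformer: fixed PD gains that ignore the context."""
    return -30.0 * x[0] - 8.0 * x[1]


context = build_context([(0.1, 0.0), (-0.2, 0.0), (0.0, 0.5)], horizon=50)
final_state, trajectory = run_closed_loop(stand_in_policy, context, x0=(0.2, 0.0), steps=500)
print(context.shape, trajectory.shape)  # (150, 3) (500, 3)
```

In the actual setting, the policy would be the transformer conditioned on `context` and the closed-loop `history`; the PD stand-in only exercises the loop structure.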

Low-level stabilization is challenging because small errors in predicted control actions can compound over time and push the system into states not seen during training (distribution shift). Transformer models are also numerically sensitive when they must predict values spanning several orders of magnitude, as in stabilizing control sequences, which move from large to small magnitudes. Adding Gaussian noise to the training data forces the expert controller to generate corrective torques for perturbed states, so the transformer observes the recovery behavior needed for closed-loop stability.
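The noise-injection idea can be sketched as follows, assuming a hypothetical pendulum plant and a stand-in PD expert (neither is taken from the paper). At every step the state is perturbed with additive Gaussian noise of standard deviation `sigma`, and the expert labels the perturbed state, so the recorded actions include corrections for off-nominal states. All names and constants here are illustrative assumptions.

```python
import numpy as np

# Hypothetical plant and expert; constants are illustrative, not from the paper.
DT, G_OVER_L = 0.02, 9.81


def plant_step(x, u):
    """One explicit-Euler step of theta'' = (g / l) * sin(theta) + u."""
    theta, omega = x
    return np.array([theta + DT * omega, omega + DT * (G_OVER_L * np.sin(theta) + u)])


def expert(x, kp=30.0, kd=8.0):
    """Stand-in expert: PD feedback toward the upright equilibrium."""
    return -kp * x[0] - kd * x[1]


def expert_rollout(x0, steps, sigma, rng):
    """Roll out the expert, adding Gaussian noise to the state before each action.

    Because the expert acts on the perturbed state, the dataset records
    corrective actions rather than only the clean nominal trajectory.
    """
    x = np.asarray(x0, dtype=float)
    states, actions = [], []
    for _ in range(steps):
        x = x + rng.normal(0.0, sigma, size=2)  # additive Gaussian perturbation
        u = expert(x)
        states.append(x.copy())
        actions.append(u)
        x = plant_step(x, u)
    return np.array(states), np.array(actions)


rng = np.random.default_rng(0)
clean_states, clean_actions = expert_rollout((0.2, 0.0), steps=200, sigma=0.0, rng=rng)
noisy_states, noisy_actions = expert_rollout((0.2, 0.0), steps=200, sigma=0.05, rng=rng)
```

With `sigma=0.0` the late actions shrink toward zero, so a model trained on them sees almost no corrective behavior; with noise, the late actions remain informative about how to recover from perturbations.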

Experiments on the cartpole and acrobot benchmarks show that the control performance of trained transformers improves as the context length increases. With sufficient context, the transformer models outperform naive nominal baselines, and the results include cases of out-of-distribution stabilization. Ongoing work includes a more thorough comparison of the transformer model against other data-driven baseline methods.

2. Hallucination classification for safety-critical LLM use

The project proposes safety metrics for transformer-based models under worst-case disturbances such as prompt injection, jailbreaking, and intrinsic noise. The hallucination work contributes to this aim by introducing a weighted metric for detecting and categorizing LLM hallucinations in safety-sensitive applications.

The framework combines semantic similarity, F1, ROUGE, BLEU, and exact match scores, then uses a rubric to classify hallucinations into factual errors, speculative responses, logical fallacies, and improbable scenarios. On TruthfulQA with Falcon 7B, the metric identified 387 hallucinated responses and 430 non-hallucinated responses, closely matching a GPT-4-based evaluation.
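A weighted combination of per-metric scores can be sketched as below. This is a minimal illustration under loud assumptions: the weights in `WEIGHTS` and the `threshold` in `is_hallucination` are hypothetical (the values used in the paper are not stated here), semantic similarity, ROUGE, and BLEU are assumed to be computed elsewhere and passed in as scores in [0, 1], and the rubric that assigns the four hallucination categories is not reproduced. Only token-level F1 and exact match are computed directly.

```python
from collections import Counter


def exact_match(pred: str, ref: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(pred.strip().lower() == ref.strip().lower())


def token_f1(pred: str, ref: str) -> float:
    """Bag-of-tokens F1 between a model response and a reference answer."""
    p, r = pred.lower().split(), ref.lower().split()
    common = sum((Counter(p) & Counter(r)).values())
    if common == 0:
        return 0.0
    precision, recall = common / len(p), common / len(r)
    return 2 * precision * recall / (precision + recall)


# Hypothetical weights (summing to 1); not the values used in the paper.
WEIGHTS = {"semantic": 0.40, "f1": 0.20, "rouge": 0.15, "bleu": 0.15, "exact": 0.10}


def weighted_score(pred, ref, semantic, rouge, bleu, weights=WEIGHTS):
    """Combine per-metric scores in [0, 1]; semantic, ROUGE, and BLEU are precomputed."""
    scores = {
        "semantic": semantic,
        "f1": token_f1(pred, ref),
        "rouge": rouge,
        "bleu": bleu,
        "exact": exact_match(pred, ref),
    }
    return sum(weights[name] * scores[name] for name in weights)


def is_hallucination(score, threshold=0.5):
    """Hypothetical decision rule: low agreement with the reference flags a hallucination."""
    return score < threshold


# Illustrative placeholder scores, not results from the paper.
ref = "Paris is the capital of France"
good = weighted_score("Paris is the capital of France", ref, semantic=1.0, rouge=1.0, bleu=1.0)
bad = weighted_score("The capital of France is Lyon", ref, semantic=0.3, rouge=0.4, bleu=0.2)
print(is_hallucination(good), is_hallucination(bad))  # False True
```

Note that the wrong answer still shares five of six tokens with the reference (F1 = 5/6), which is one reason a single lexical metric is a weak hallucination detector and a weighted combination with semantic similarity is attractive.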

Papers and reports

- How do Transformers Perform Low-Level Control In-Context?
  Ebonye Smith, Aidan Andrews, Phoenix Wilson, Alexis Frias, Ayush Pandey, and Gireeja Ranade. IEEE Conference on Decision and Control (under review).

- Classification of Hallucinations in Large Language Models Using a Novel Weighted Metric
  Saaketh Raghava. UC Merced Undergraduate Research Journal, 17(1), 2024. DOI: 10.5070/M417164607. PDF on eScholarship.

Code

- Transformer control experiments: code for the in-context control experiments is available at github.com/ebonyelsmith/transformer_control.

Presentations

- Talk by Ayush Pandey at Lawrence Livermore National Lab (LLNL) workshop on AI safety: August 2025.

- Undergraduate student presentations: Aidan Andrews (UC Berkeley), Saaketh Raghava (UC Merced), and Axel Muniz Tello (UC Merced)

People

- UC Merced: Ayush Pandey, Saaketh Raghava, Axel Muniz Tello, Alex Frias (now graduate student at USC)

- UC Berkeley: Gireeja Ranade, Ebonye Smith, Phoenix Wilson, Aidan Andrews (NSF REU Scholar, undergraduate at UIUC)

Funding

This project is supported by a CITRIS and the Banatao Institute 2023 Seed Award.


This page contains copyrighted material from publications that are either under review or published. The rest of the content is free to use and may be shared with proper attribution. See the LICENSE for details. You can contribute to this page by creating a pull request on GitHub.